I was asked by Yael Claire of Spiritwo to put together an interactive audiovisual performance environment for her show at SPILL Festival 2014.

The environment needed to be simple to use, controlled via a Roland MIDI pedalboard and a Microsoft Kinect, and to require no direct computer interaction on her part. It allowed for the sequencing and playback of a collection of video and audio material, as well as a separate interactive animated OpenGL plane.

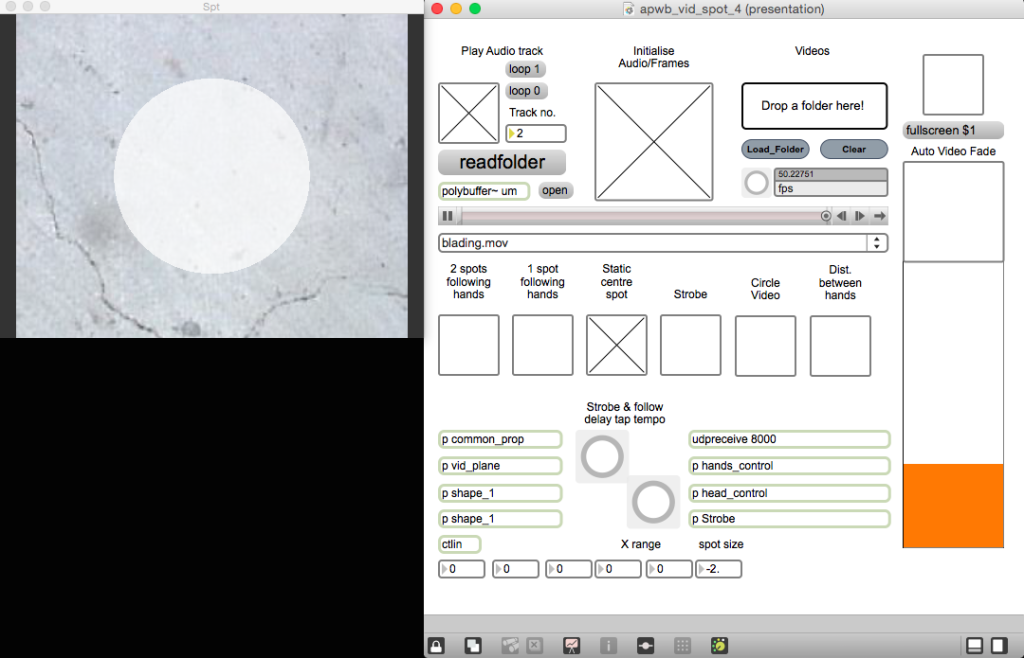

The animated plane revolved around a spotlight theme and offered several modes of interaction: a single spotlight following the body, two spotlights with one following each hand, a spotlight that grew in size relative to the distance between the hands, a strobing effect, and the ability to texture the video directly onto the animated plane. Blending between the video and the animated plane was controlled automatically based on the start and end points of the video material. There was also a manual control that let Claire blend between modes at will and set the time delay between her movement and the animations.
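The actual work was done in a Max/MSP patch, so there is no text code to show, but the logic behind three of these controls can be roughly sketched in Python. Everything here is an illustrative assumption rather than the patch itself: the function and class names, the radius range, the 1.5 m maximum hand span and the frame-based delay are all made up for the example.

```python
import math
from collections import deque


def spotlight_radius(left_hand, right_hand,
                     min_radius=0.05, max_radius=0.5, max_span=1.5):
    """Map the distance between two hand positions to a spotlight radius.

    All ranges are hypothetical; hand positions are (x, y, z) tuples.
    """
    span = math.dist(left_hand, right_hand)
    t = min(span / max_span, 1.0)  # normalise and clamp to 0..1
    return min_radius + t * (max_radius - min_radius)


def crossfade(video_level, plane_level, mix):
    """Linear blend: mix=0 shows only the video, mix=1 only the plane."""
    mix = max(0.0, min(1.0, mix))
    return (1.0 - mix) * video_level + mix * plane_level


class DelayLine:
    """Return the position seen `frames` updates ago (hypothetical helper).

    Until enough positions have arrived, the oldest one seen is returned.
    """

    def __init__(self, frames):
        self.buffer = deque(maxlen=frames + 1)

    def push(self, position):
        self.buffer.append(position)
        return self.buffer[0]
```

In this sketch a longer `DelayLine` makes the spotlight lag further behind the performer, and `mix` could be driven either by the video timeline or by a pedal value.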

Drawing on my previous work with the Kinect from GAIT/GATE, I tracked Claire’s hands and body position via skeleton tracking. The tracking data was then sent into Max/MSP to control the animated plane, along with the MIDI data from the foot pedals to control playback modes. I chose to build in Max/MSP rather than C++ because of the project's constraints (time and budget): Max/MSP offers greater flexibility for experimenting and prototyping. The finished patch was designed to be simple to use, required little to no knowledge to run, and could be reused with a variety of different audio or video material.